Listening to Language Models

The source code for the sonification and art installation is available at github.com/realBarry123/llama-sonification.

My project asks if we can know certain things by hearing them. Non-physical, simulated things, things that are not inherently audible. For many users, first-hand knowledge of AI language models relies on interactions with abstracted chat interfaces, while the internal processes remain invisible.1 Here, I explore the possibility of perceiving model internals through a different abstraction.

Turning data into sound is known as sonification, an emerging technique of data monitoring and exploration. Designers are faced with a combination of mistrust and fascination from researchers and the general public alike.2 Contributing to such attitudes are an array of prevalent but often unprincipled cultural assumptions about hearing, such as those outlined in Sterne’s “Audiovisual Litany.”3 Though the fascination with sound may not be principled or justifiable, designers aware of such tendencies can use this fascination to their advantage and invite attention and curiosity. This, along with physically grounded differences between seeing and hearing, makes sound a compelling alternative method of perceiving a model.

A language model, at its core, is a series of transformations applied to lists of numbers to allow for next-token prediction based on an existing text sequence. I used a direct scaling from the activation level of each artificial neuron to an audible frequency. The following are clips of sonifications created from three different small language models, each generating eight tokens:

Llama 3.2 1B Instruct (17 layers, 2048 hidden dimensions):

“Question: Let f = -0.”

Pythia 70M Deduped (7 layers, 512 hidden dimensions):

“, and the other two are the same”

Pythia 410M Deduped (25 layers, 1048 hidden dimensions):

“, and the other is the one that”

I showed preliminarily that my method reflects certain quantifiable characteristics about the model and its generated content. Across all models tested, the sonification sounds less chaotic at later training checkpoints, and there is a significant audible difference when generating the first token in a sequence (i.e. after the `<|begin_of_text|>` token in Llama models). Future work will involve adjusting the scaling method to better highlight statistical outliers, as well as a more thorough exploration into the transferability of observations between models.

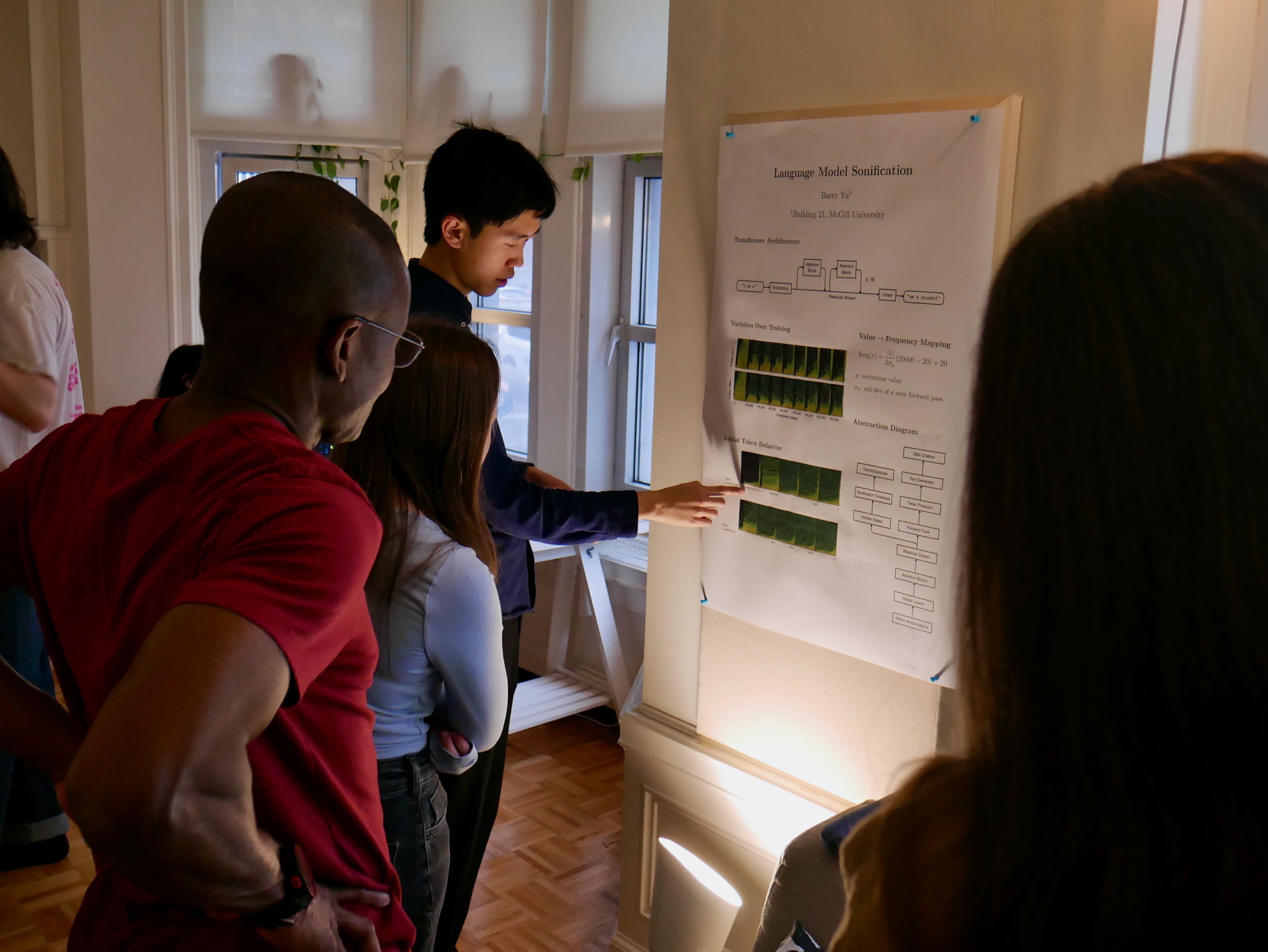

I presented the sonification as a sound installation at the Building 21 scholar showcase on April 9th, 2026. I used a two-channel stereo speaker system placed within a sealed cardboard box, a reference to the “black box” problem of interpreting deep learning algorithms. A language model produces text on a laptop screen while the sonification is generated in real time.

During the showcase, my audience often interpreted the sound as a “heartbeat” or “voice” of the model. What has a voice and a heartbeat might have a body, and a listener can begin to think about AI not as a mind, but as a creature to be encountered.

The source code for the sonification and art installation is available at github.com/realBarry123/llama-sonification.

Notes

1. I use “invisible” rather than “opaque” to draw a distinction between interpreting a model (tricky) and simply perceiving its internals without necessarily being able to explain them.

2. John G. Neuhoff. “Is Sonification Doomed to Fail?” (25th International Conference on Auditory Display, June 23, 2019).

3. Jonathan Sterne. The Audible Past (Durham: Duke University Press, 2003), 15.

.svg)